A new computing paradigm, thermodynamic computing, has entered the scene. Okay, okay, maybe it’s just probabilistic computing by a new name. Both use noise (such as that caused by thermal fluctuations) to perform computations instead of fighting it. Still, it’s a new physical approach.

“If you’re talking about computing paradigms, no, it’s the same computing paradigm” as probabilistic computing, says Behtash Behin-Aein, the CTO and founder of probabilistic computing startup Ludwig Computing (named after Ludwig Boltzmann, a scientist largely responsible for the field of, you guessed it, thermodynamics). “But it’s a new implementation,” he adds.

In a recent publication in Nature Communications, New York-based startup Normal Computing detailed its first prototype of what it calls a thermodynamic computer. The team demonstrated that the prototype can harness noise to invert matrices. They also demonstrated Gaussian sampling, which underlies some AI applications.

How Noise Can Help Some Computing Problems

Conventionally, noise is the enemy of computation. However, certain applications actually rely on artificially generated noise. And using naturally occurring noise can be vastly more efficient.

“We’re focusing on algorithms that are able to leverage noise, stochasticity, and non-determinism,” says Zachery Belateche, silicon engineering lead at Normal Computing. “That algorithm space turns out to be huge, everything from scientific computing to AI to linear algebra. But a thermodynamic computer is not going to be helping you check your email anytime soon.”

For these applications, a thermodynamic (or probabilistic) computer starts out with its components in some semi-random state. Then, the problem the user is trying to solve is programmed into the interactions between the components. Over time, these interactions allow the components to come to equilibrium. This equilibrium is the solution to the computation.
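
The recipe above can be imitated in software. The following is only a minimal sketch on a conventional computer, using pseudo-random noise in place of physical noise; it is not Normal Computing’s or Ludwig Computing’s method. It assumes a simple energy function, U(x) = ½ xᵀAx − bᵀx, whose noisy (overdamped Langevin) dynamics settle into an equilibrium with mean equal to the solution of A x = b. The matrix `A`, vector `b`, step size, and step counts are all illustrative choices.

```python
import random

def relax_to_equilibrium(A, b, dt=0.01, steps=400_000, burn_in=20_000, seed=0):
    """Time-average a noisy, overdamped system with energy
    U(x) = 0.5 * x^T A x - b^T x (assumed for illustration).
    At equilibrium, the mean of x is the solution of A x = b."""
    rng = random.Random(seed)
    n = len(b)
    # Components start out in a random state.
    x = [rng.gauss(0.0, 1.0) for _ in range(n)]
    noise_scale = (2.0 * dt) ** 0.5
    running_sum = [0.0] * n
    for step in range(steps):
        # The "program" lives in the interactions: force = -(A x - b).
        force = [b[i] - sum(A[i][j] * x[j] for j in range(n)) for i in range(n)]
        # Euler-Maruyama step: deterministic drift plus a random kick.
        x = [x[i] + force[i] * dt + noise_scale * rng.gauss(0.0, 1.0)
             for i in range(n)]
        # Discard the early transient, then accumulate the time average.
        if step >= burn_in:
            for i in range(n):
                running_sum[i] += x[i]
    kept = steps - burn_in
    return [s / kept for s in running_sum]

# Hypothetical two-component problem (symmetric, positive definite A).
A = [[2.0, 0.5], [0.5, 1.0]]
b = [1.0, 0.0]

estimate = relax_to_equilibrium(A, b)
exact = [1.0 / 1.75, -0.5 / 1.75]  # A^{-1} b, worked out by hand
print("estimate:", estimate)
print("exact:   ", exact)
```

The estimate is noisy and only approaches the exact answer as the averaging time grows, which is the trade-off such a scheme makes in exchange for letting noise do part of the work.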

This approach is a natural fit for certain scientific computing applications that already include randomness, such as Monte Carlo simulations. It is also well suited to the AI image generation algorithm Stable Diffusion, and to a type of AI known as probabilistic AI. Surprisingly, it also appears to be well suited to some linear algebra computations that are not inherently probabilistic. This makes the approach more broadly applicable to AI training.

“Now we see with AI that paradigm of CPUs and GPUs is being used, but it’s being used because it was there. There was nothing else. Say I found a gold mine. I want to basically dig it. Do I have a shovel? Or do I have a bulldozer? I have a shovel, just dig,” says Mohammad C. Bozchalui, the CEO and cofounder of Ludwig Computing. “We’re saying this is a different world which requires a different tool.”

Normal Computing’s Approach

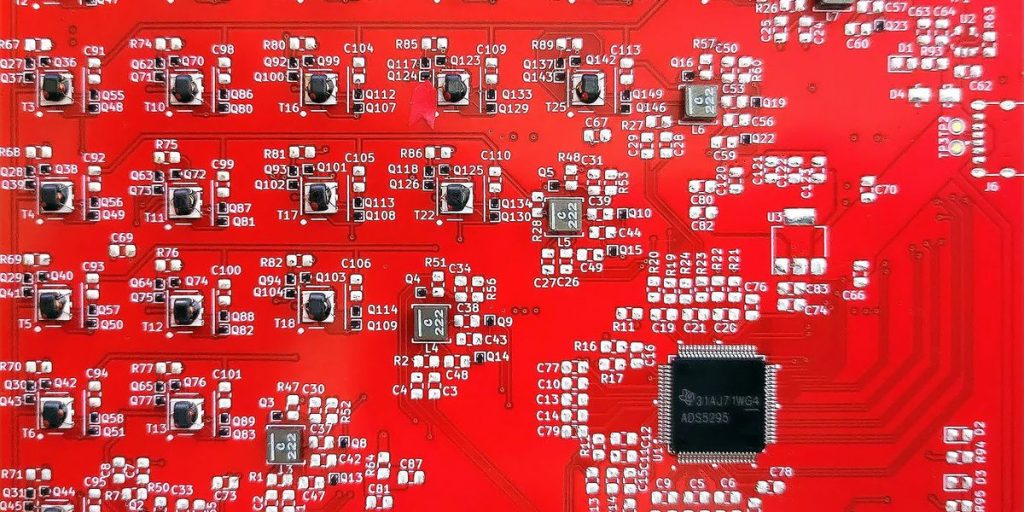

Normal Computing’s prototype chip, which the company calls the stochastic processing unit (SPU), consists of eight capacitor-inductor resonators and random noise generators. Each resonator is connected to every other resonator via a tunable coupler. The resonators are initialized with randomly generated noise, and the problem under study is programmed into the couplings. After the system reaches equilibrium, the resonator units are read out to obtain the solution.
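
To get a feel for how reading out a noisy system at equilibrium can yield a matrix inverse, here is a rough software analogy, not a model of the actual circuit. It assumes eight idealized, all-to-all coupled units with overdamped dynamics dx = −A x dt + √2 dW, whose equilibrium distribution is Gaussian with covariance A⁻¹. The coupling matrix `A`, the step size, and the function name `estimate_inverse` are hypothetical choices for illustration.

```python
import random

N_UNITS = 8  # matches the eight resonators described above

def estimate_inverse(A, dt=0.02, steps=80_000, burn_in=5_000, seed=1):
    """Estimate A^{-1} as the equilibrium covariance of the noisy
    dynamics dx = -A x dt + sqrt(2) dW (an idealized stand-in for
    coupled resonators; A must be symmetric positive definite)."""
    rng = random.Random(seed)
    n = len(A)
    # Units are initialized with random noise.
    x = [rng.gauss(0.0, 1.0) for _ in range(n)]
    noise_scale = (2.0 * dt) ** 0.5
    cov_sum = [[0.0] * n for _ in range(n)]
    for step in range(steps):
        # The couplings A encode the problem.
        drift = [sum(A[i][j] * x[j] for j in range(n)) for i in range(n)]
        x = [x[i] - drift[i] * dt + noise_scale * rng.gauss(0.0, 1.0)
             for i in range(n)]
        # After the transient, "read out" the units and accumulate x x^T.
        if step >= burn_in:
            for i in range(n):
                xi = x[i]
                row = cov_sum[i]
                for j in range(n):
                    row[j] += xi * x[j]
    kept = steps - burn_in
    return [[cov_sum[i][j] / kept for j in range(n)] for i in range(n)]

# Hypothetical couplings: unit self-term 1.0, every pair coupled with 0.1.
A = [[1.0 if i == j else 0.1 for j in range(N_UNITS)] for i in range(N_UNITS)]

A_inv_estimate = estimate_inverse(A)

# Sanity check: A times the estimate should be close to the identity.
product = [[sum(A[i][k] * A_inv_estimate[k][j] for k in range(N_UNITS))
            for j in range(N_UNITS)] for i in range(N_UNITS)]
max_error = max(abs(product[i][j] - (1.0 if i == j else 0.0))
                for i in range(N_UNITS) for j in range(N_UNITS))
print("largest deviation from identity:", max_error)
```

The result is statistical rather than exact: the deviation shrinks as more samples are averaged, and the finite step size adds a small systematic bias of its own.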

“In a conventional chip, everything is very highly controlled,” says Gavin Crooks, a staff research scientist at Normal Computing. “Take your foot off the control a little bit, and the thing will naturally start behaving more stochastically.”

Although this was a successful proof of concept, the Normal Computing team acknowledges that this prototype is not scalable. But they have amended their design, eliminating tricky-to-scale inductors. They now plan to create their next design in silicon, rather than on a printed circuit board, and expect their next chip to come out later this year.

How far this technology can be scaled remains to be seen. The design is CMOS-compatible, but there is much to be worked out before it can be used to solve large-scale real-world problems. “It’s amazing what they have done,” Bozchalui of Ludwig Computing says. “But at the same time, there’s a lot to be worked to really take it from what is today to commercial product to something that can be used at the scale.”

A Different Vision

Although probabilistic computing and thermodynamic computing are essentially the same paradigm, there is a cultural difference. The companies and researchers working on probabilistic computing almost exclusively trace their academic roots to the group of Supriyo Datta at Purdue University. The three cofounders of Normal Computing, however, have no ties to Purdue and come from backgrounds in quantum computing.

As a result, the Normal Computing cofounders have a slightly different vision. They imagine a world where different kinds of physics are used for their own computing hardware, and every problem that needs solving is matched with the optimal hardware implementation.

“We coined this term physics-based ASICs,” Normal Computing’s Belateche says, referring to application-specific integrated circuits. In their vision, a future computer will have access to conventional CPUs and GPUs, but also a quantum computing chip, a thermodynamic computing chip, and any other paradigm people might dream up. And each computation will be sent to an ASIC that uses the physics that is most appropriate for the problem at hand.
